Course Introduction

Why Study Architecture?

Ability to write efficient programs

Why does this program run so much faster when the data is sorted?

Why does this program run so much slower than this one?
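The notes do not show the programs behind these questions, so the following is only one classic candidate for the first one. It is a minimal sketch: the function names and the pseudo-random fill are invented for illustration. The loop contains a data-dependent branch. On many processors that branch is easy to predict when the bytes are sorted and hard to predict when they are shuffled, even though both runs do the same arithmetic.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Sum only the values that are at least 128.
 * The `if` below is a branch whose outcome depends on the data. */
long long sum_large(const uint8_t *data, size_t n) {
    assert(data != NULL || n == 0);
    long long sum = 0;
    for (size_t i = 0; i < n; i++) {
        if (data[i] >= 128) {
            sum += data[i];
        }
    }
    return sum;
}

/* Comparison callback for qsort: ascending order of byte values. */
static int compare_bytes(const void *a, const void *b) {
    return (int)*(const uint8_t *)a - (int)*(const uint8_t *)b;
}

/* Sort the bytes in place so that small values come first. */
void sort_bytes(uint8_t *data, size_t n) {
    qsort(data, n, sizeof data[0], compare_bytes);
}

/* Fill the array with reproducible pseudo-random bytes
 * (a simple linear congruential generator, for illustration only). */
void fill_pseudo_random(uint8_t *data, size_t n, uint32_t seed) {
    uint32_t state = seed;
    for (size_t i = 0; i < n; i++) {
        state = state * 1664525u + 1013904223u;
        data[i] = (uint8_t)(state >> 24);
    }
}
```

To try it, fill two large arrays with the same seed, call sort_bytes on one of them, and time sum_large on each. Both calls return the same sum. Whether you see a speed difference depends on the processor and the compiler: an optimizing compiler may replace the branch with branch-free code, in which case the gap disappears.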

Needed for some areas

- Operating systems

- Compilers/interpreters

- Parallel computing

- Embedded systems

- Games

- Security

Remove the "Magic"

After this class, you should understand how computers really work rather than viewing them as "black boxes".

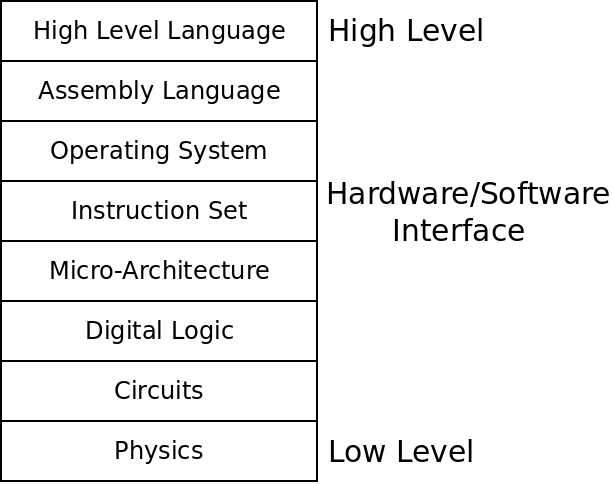

Levels of Abstraction

Computers are built in multiple layers of abstraction. These layers let us program computers more easily, but they make it harder to understand the whole machine.

In this class, we will study circuits, digital logic, micro-architecture, the instruction set and assembly language programming.

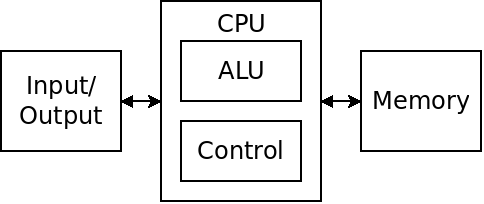

The von Neumann Architecture

CPU

The central processing unit (CPU) is responsible for directing all other hardware and carrying out the program to be executed.

ALU

The arithmetic logic unit (ALU) is the part of the CPU that actually carries out computations such as addition, AND, and OR.

Control

The control unit is the part of the CPU responsible for keeping track of where we are in a program, directing what the ALU does, and maintaining various status conditions.

Memory

Memory refers to "main" memory (RAM), not a hard drive. Memory stores the instructions and data used by the processor.

Input/Output

Devices such as a mouse, keyboard, graphics card, or hard drive.

The big idea behind the von Neumann model is that the instructions which comprise the program are stored as data inside the computer's memory. This moved us away from the idea of fixed-purpose hardware to general-purpose computers.
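The stored-program idea can be sketched with a toy machine. Everything here is hypothetical: the instruction encoding, the opcode names, and the single accumulator register are invented for illustration and do not correspond to any real processor. The point is that one array holds both the program and its data, a small function plays the role of the ALU, and a loop plays the role of the control.

```c
#include <assert.h>
#include <stdint.h>

enum { MEM_WORDS = 256 };

/* Made-up instruction set for a one-register (accumulator) machine. */
enum opcode { OP_HALT = 0, OP_LOAD = 1, OP_STORE = 2, OP_ADD = 3, OP_AND = 4, OP_OR = 5 };

/* Pack an opcode (high byte) and a memory address (low byte) into one word. */
#define INSTR(op, addr) ((uint16_t)(((op) << 8) | ((addr) & 0xFF)))

/* ALU: combines two values according to the requested operation. */
static uint16_t alu(enum opcode op, uint16_t a, uint16_t b) {
    switch (op) {
    case OP_ADD: return (uint16_t)(a + b);
    case OP_AND: return a & b;
    case OP_OR:  return a | b;
    default:     return a;
    }
}

/* Control: fetch, decode, and execute instructions until HALT.
 * Returns the final accumulator value. */
uint16_t run(uint16_t mem[MEM_WORDS]) {
    uint16_t acc = 0;  /* accumulator register */
    uint8_t pc = 0;    /* program counter; wraps at 256, so it always indexes mem */
    for (;;) {
        uint16_t instr = mem[pc];                    /* fetch */
        pc++;
        enum opcode op = (enum opcode)(instr >> 8);  /* decode */
        uint8_t addr = (uint8_t)(instr & 0xFF);
        switch (op) {                                /* execute */
        case OP_HALT:  return acc;
        case OP_LOAD:  acc = mem[addr]; break;
        case OP_STORE: mem[addr] = acc; break;
        case OP_ADD:
        case OP_AND:
        case OP_OR:    acc = alu(op, acc, mem[addr]); break;
        default:       assert(!"unknown opcode"); return acc;
        }
    }
}
```

For example, placing INSTR(OP_LOAD, 16), INSTR(OP_ADD, 17), INSTR(OP_STORE, 18), INSTR(OP_HALT, 0) at addresses 0 to 3, with the values 2 and 3 at addresses 16 and 17, makes run return 5 and leaves 5 at address 18. Because the program is just data in the same array, overwriting address 1 with INSTR(OP_OR, 17) changes what the machine computes without touching any "hardware".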

Design Goals

All computer systems are capable of computing the same things if given enough time and memory. The set of computations possible on the first computer you owned is the same as the set possible on a supercomputer.

The various computer designs we will evaluate do differ in other ways:

- Performance

- Power consumption

- Size

- Cost

- Reliability

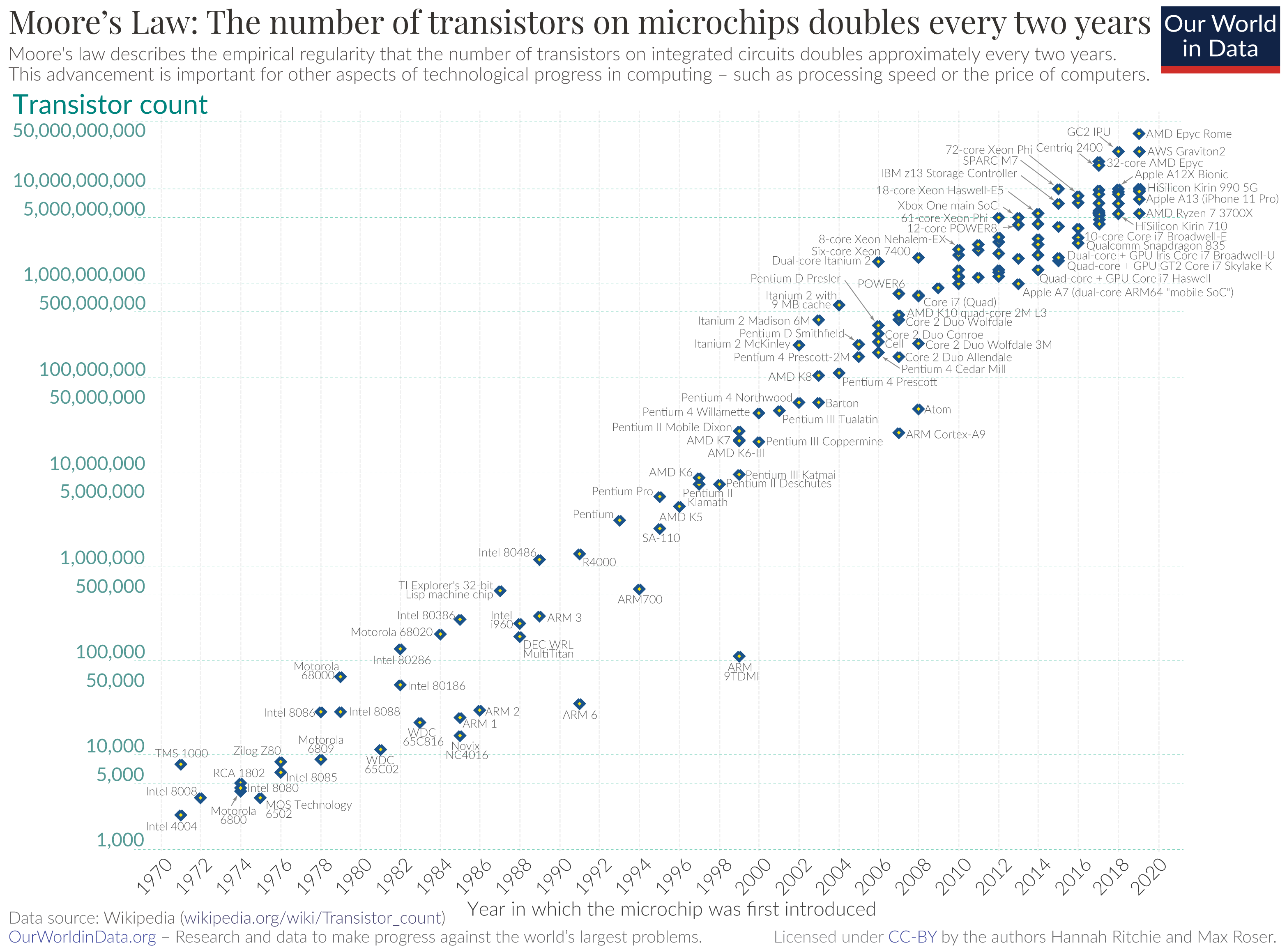

Moore's Law

Moore's law is an empirical rule, proposed by Gordon Moore in 1965 and revised by him in 1975, which says that the number of transistors in a fixed-size integrated circuit doubles every two years.
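To get a feel for what doubling every two years means, here is a minimal sketch of the arithmetic. The function name is made up, and the projection is an idealization of the rule, not a record of what any real chip contained.

```c
#include <assert.h>
#include <stdint.h>

/* Idealized Moore's law projection: the count doubles once per
 * completed two-year period. Partial periods are ignored.
 * The caller must make sure the result fits in 64 bits. */
uint64_t moore_projection(uint64_t start_count, unsigned years) {
    unsigned doublings = years / 2;
    assert(doublings < 64);  /* shifting by 64 or more is undefined in C */
    return start_count << doublings;
}
```

As an illustration, starting from 2,300 transistors (the commonly cited figure for Intel's 4004 from 1971), moore_projection(2300, 50) gives 77,175,193,600. That is 25 doublings, a factor of more than 33 million.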

The speed of a computer chip is partly determined by the distance electricity needs to travel through it. Smaller transistors can be packed closer together, which shortens those distances, so Moore's law has had the effect of providing exponentially faster computers over the years.

Moore's Law in Intel Chips - credit Wikimedia

Because of Moore's law, engineers have given us astounding performance gains "for free". The software world has taken advantage of this by creating higher-level, more abstract layers of software.

The End of Moore's Law

Moore's law cannot continue indefinitely. We are approaching the physical limits on the size of transistors: some transistor features are now only a few atoms thick. We have been behind the pace proposed by Moore for several years now, and in 2019 Nvidia's CEO declared that Moore's law had ended. It is likely that we will continue to see better hardware, but at a slower pace and a higher cost.

This makes it all the more important to be able to write efficient code: more and more of the future performance improvements will have to come from the software side, in the realm of computer science.

In the words of Agner Fog on his CPU blog:

“The biggest potential for improved performance is now, as I see it, on the software side. Software producers have been quick to utilize the exponentially increasing power of modern computers that has been provided thanks to Moore's law. The software industry has taken advantage of the exponentially growing computing power and made use of more and more advanced development tools and software frameworks. These high-level development tools and frameworks have made it possible to develop new software products faster, but at the cost of consuming more processing power in the end product. Many of today's software products are quite wasteful in their excessive consumption of hardware computing power.”

The Game Boy Advance

We will use the Game Boy Advance (GBA) as a running case study in this course. We will study the hardware of the system in some detail and write programs for it in both C and assembly.

GBA specs:

- 16.78 MHz ARM CPU (a clock rate about 60 times lower than that of a 1 GHz processor)

- 240 x 160 pixel screen

- 384 kilobytes of memory (about 2,700 times less than 1 gigabyte of memory)
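The comparisons in this list are simple ratios, and the same numbers give a rough feel for the budget a GBA program has. The sketch below makes several assumptions that are not in the spec list: a kilobyte is taken as 1024 bytes, a gigabyte as 1024 x 1024 x 1024 bytes, and the frame rate passed to cycles_per_pixel is whatever the caller chooses. The function names are invented for illustration.

```c
#include <assert.h>

#define GBA_CLOCK_HZ      16780000.0         /* 16.78 MHz, from the spec list */
#define GBA_SCREEN_WIDTH  240
#define GBA_SCREEN_HEIGHT 160
#define GBA_MEMORY_BYTES  (384.0 * 1024.0)   /* assumes 1 kilobyte = 1024 bytes */

/* How many times higher another processor's clock rate is. */
double clock_ratio(double other_hz) {
    return other_hz / GBA_CLOCK_HZ;
}

/* How many times more memory another machine has. */
double memory_ratio(double other_bytes) {
    return other_bytes / GBA_MEMORY_BYTES;
}

/* CPU cycles available per screen pixel if the whole screen is
 * redrawn `frames_per_second` times each second. */
double cycles_per_pixel(double frames_per_second) {
    assert(frames_per_second > 0.0);
    double pixels = (double)GBA_SCREEN_WIDTH * GBA_SCREEN_HEIGHT;
    return GBA_CLOCK_HZ / frames_per_second / pixels;
}
```

With these assumptions, clock_ratio(1e9) is about 59.6 and memory_ratio(1024.0 * 1024.0 * 1024.0) is about 2730.7. Assuming a 60 frames-per-second redraw, cycles_per_pixel(60.0) is about 7.3, which leaves very little room for per-pixel work done by the CPU.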

The GBA has some things which make programming it different from most computers:

- The relatively low performance requires a careful eye to efficiency.

- There is no operating system. Your program is the only thing running on the hardware, so you have full control.

- There is no "API". You interact with the hardware not by calling functions, but by reading and writing special memory locations.

- There is unique hardware for dealing with graphics and sounds. There are no drivers, so we will have to interact with this hardware directly.
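Writing to special memory locations looks like ordinary pointer code in C. The sketch below is hedged in two ways. First, the addresses and values in the comment come from commonly published GBA hardware documentation, not from these notes, so treat them as assumptions until we cover the hardware in detail. Second, the function takes the framebuffer as a parameter so that it can also be run on a PC against an ordinary array; the function names are invented for illustration.

```c
#include <assert.h>
#include <stdint.h>

enum { SCREEN_WIDTH = 240, SCREEN_HEIGHT = 160 };

/* Pack three 5-bit color channels (0 to 31 each) into one 15-bit color,
 * with red in the lowest bits. */
static inline uint16_t rgb15(unsigned r, unsigned g, unsigned b) {
    return (uint16_t)((r & 31u) | ((g & 31u) << 5) | ((b & 31u) << 10));
}

/* Set one pixel by storing a color into the framebuffer.
 * `volatile` tells the compiler that every store matters, because the
 * memory may be watched by hardware rather than by our program. */
void plot_pixel(volatile uint16_t *framebuffer, int x, int y, uint16_t color) {
    assert(x >= 0 && x < SCREEN_WIDTH && y >= 0 && y < SCREEN_HEIGHT);
    framebuffer[y * SCREEN_WIDTH + x] = color;
}

/* On real GBA hardware (assumed addresses, do not run this on a PC):
 *
 *   *(volatile uint16_t *)0x04000000 = 0x0403;  // display control: bitmap mode 3, background 2 on
 *   plot_pixel((volatile uint16_t *)0x06000000, 120, 80, rgb15(31, 0, 0));
 *
 * There is no function call into a driver here: the store itself is
 * what makes the pixel appear.
 */
```

On a PC, the same function can be exercised by passing an array of 240 x 160 uint16_t values in place of the hardware address. After plot_pixel(buffer, 120, 80, rgb15(31, 0, 0)), the element at index 80 * 240 + 120 holds 0x001F.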